Hilariously, Japan just discovered Myers-Briggs, and it's super popular with the youths as a trending personality quiz. My friend asked me if I had seen "MBTI" and (once I figured out WTF they were talking about) was floored when I told them about its origins note.com/yanotomoaki/n/nbb31a0e5604f

Shout for DANGER

Free idea for anyone who wants it.

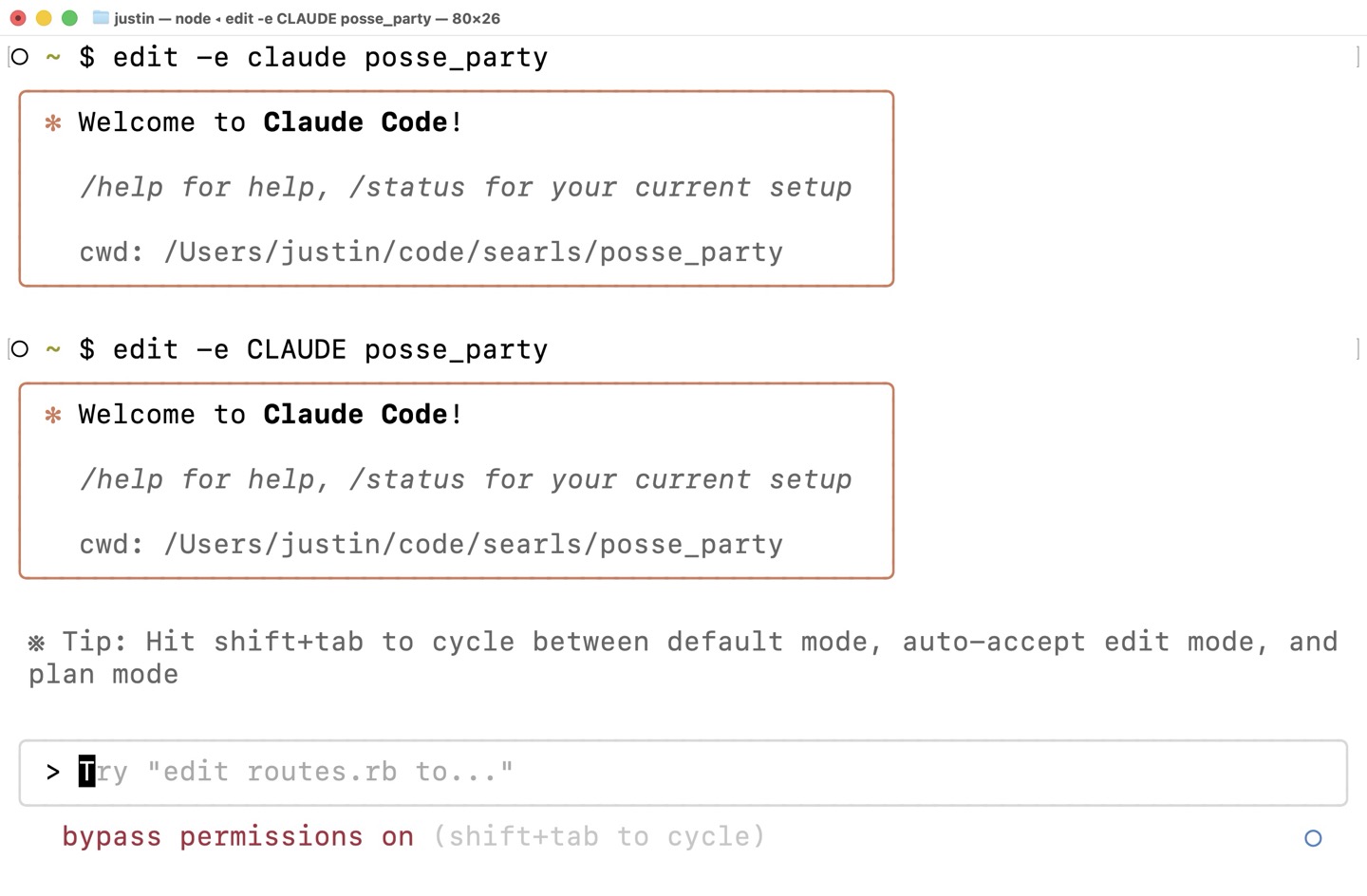

I've been juggling so many LLM-based editors and CLI tools that I've started collecting them into meta scripts like this shell-completion-aware edit dingus that I use for launching into my projects each day.

Because many of these CLIs have separate "safe" and "for real though" modes, I've picked up the convention of giving the editor name in ALL CAPS to mean "give me dangerous mode, please."

So:

$ edit -e claude posse_party

Will open Claude Code in ~/code/searls/posse_party in normal mode.

And:

$ edit -e CLAUDE posse_party

Will do the same, while also passing the --dangerously-skip-permissions flag, which I refuse to type.
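Here is a minimal sketch of how an ALL-CAPS dispatcher like this could work. It assumes projects live under `~/code/searls/` and that Claude Code's `--dangerously-skip-permissions` is the only "dangerous mode" flag that needs mapping. `build_command`, `PROJECTS_ROOT`, and `DANGER_FLAGS` are hypothetical illustrations, not the actual edit script.

```python
from pathlib import Path

# Hypothetical project root, inferred from the ~/code/searls/posse_party example.
PROJECTS_ROOT = Path.home() / "code" / "searls"

# Hypothetical table of "for real though" flags per editor.
# Only Claude Code's flag comes from the post; anything else is up to you.
DANGER_FLAGS = {
    "claude": ["--dangerously-skip-permissions"],
}


def build_command(editor: str, project: str) -> tuple[Path, list[str]]:
    """Return (working directory, argv) for launching `editor` in `project`.

    An all-caps editor name (e.g. "CLAUDE") requests dangerous mode.
    """
    dangerous = editor.isupper()
    name = editor.lower()
    argv = [name]
    if dangerous:
        # Fail loudly instead of silently falling back to safe mode.
        if name not in DANGER_FLAGS:
            raise ValueError(f"no dangerous mode known for {name!r}")
        argv += DANGER_FLAGS[name]
    return PROJECTS_ROOT / project, argv
```

Actually launching the editor would then be a matter of passing the result to `subprocess.run(argv, cwd=workdir)`. Raising on an unknown ALL-CAPS name is a deliberate choice: when you asked for dangerous mode, quietly getting safe mode is the more confusing failure.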

A few days back, I linked to Scott Werner's clever insight that—rather than fear the mess created by AI codegen—we should think through the flip side: an army of robots working tirelessly to clean up our code has the potential to bring the carrying cost of technical debt way down, akin to the previous decade's zero-interest rate phenomenon (ZIRP). Scott was inspired by Orta Therox's retrospective on six weeks of Claude Code at Puzzmo, which Orta himself wrote after reading my own Full-breadth Developers post.

Blogging is so back!

If you aren't familiar with Brian Marick, he's a whip-smart thinker with a frustrating knack for making contrarian points that are hard to disagree with. He saw my link and left this comment on Scott's blog post about technical debt and ZIRP. The whole comment is worth reading and should have top billing as a post in its own right, so I figured I'd highlight it here:

The problem with a ZIRP is that those questions are b-o-r-i-n-g and you can't compete with those who skip them. You're out of business before they crash. ("The market can remain irrational longer than you can remain solvent.")

Similarly, there's a collective action problem. Our society is structured such that when the optimists' predictions go wrong, they don't pay for their mistakes – rather society as a whole does. See housing derivatives in 2008, the Asian financial crisis of the late '90s, etc. ZIRP makes it cheaper to be an optimist, but someone else pays the bill for failure (Silicon Valley Bank, Savings and Loan crisis)

It's weird to see ZIRP touted as a model, given the incredible overspending that took place, which had to be clawed back once ZIRP went away. (Most notably in tech layoffs, but I'm more concerned about all the small companies that were crushed because of financials, not because of the merit of their products.)

Brian made me ashamed to admit that I had read Scott's post as an exclusively good thing, despite the fact that on a macro level, he's absolutely right: the excesses of irrational exuberance and their unfair consequences are definitely net-harmful to society. No argument there. Someone should absolutely get on that and, of course, literally no one will.

Why am I unbothered? Because as a customer, I am happy to ride a ZIRP wave for my own personal benefit. That way, even if the world burns in the end, at least I got something out of it. Last time around, I benefited from a shitload of free cloud compute, cheap taxi rides, subsidized meal services, and credit card reward arbitrage in the 2010s—even as I made sure to direct my investment portfolio towards businesses that actually, you know, made money. So it is today: the tech industry has made a nigh-infinite number of GPUs available at remarkably low prices, and I'm just some dipshit customer who is more than happy to allow investors to subsidize my usage. At the moment, I'm paying $200/month for Claude Max which admittedly feels like a bit of a stretch, until I check ccusage and realize I've burned over $4500 worth of API tokens in the last 30 days.

And, unreliable and frustrating as they may be, I'm still seeing a ton of personal value from the current crop of LLM-based tools overall. As long as that's the case, I suppose I'll keep doing whatever best assists me in achieving my goals.

Is any of this sustainable? Unlikely. Are we all cooked? Probably! But as Brian says, this is a collective action problem. I'm not going to be the one to fix it. And while I greatly admire the spirit of those who would gladly spend years of their lives as activists to also not fix it, I've got other shit I'd rather do.

My only real medium-to-long-term hope is that the local LLM scene continues to mature and evolve so as to hedge against the possibility that the AI cloud subsidy disappears and all these servers get turned off. So long as this class of tools continues to be available to those who buy fancy Apple products, how I personally approach software development will be forever changed.

(h/t to Tim Dussinger for reminding me to link to Brian's commentary.)

I'm glad I pointed Scott to Orta's Claude post, because his analogy (God, why is this man so good at analogies?) comparing agentic coding to "ZIRP for technical debt" is A-fucking-plus thoughtleading. Jealous. worksonmymachine.ai/p/entering-technical-debts-zirp-era

Connect 4

This is a copy of the Searls of Wisdom newsletter delivered to subscribers on August 7, 2025.

I just realized that Christmas in July must have been held somewhere, and I missed it. Damn.

Regardless, the blog was busy since we last checked in:

- There's some Xcode stuff for Apple people, as well as the nostalgia of finding the order confirmation of my very first Mac, a 12" iBook G4

- We got in some good thoughtlording with a software design thinkpiece, an organizational design thinkpiece, and an AI thinkpiece

- Reflections on how bang-on my favorite apocalyptic short story set in 2026 turned out to be

- A couple podcasts, too (1, 2)

Also since I last wrote you, they held the final RailsConf, an event and community that had a huge impact on my career. I was honored that Aji Slater summarized my 2017 keynote on stage, even though I don't own a single pair of white pants:

As it happens, I've been chewing on a lot of the same themes I discussed back in that How to Program talk, because the current AI-induced industry shakeup we're experiencing has underscored the importance of taking ownership over how we work. And although I didn't plan this in advance, that's kind of exactly the topic I'm writing about today.

Of course, when I talk about work, I mean it in a quite expansive sense. For most intents and purposes, I retired at the end of 2023. I contend that I still do stuff, but increasingly nothing about my day resembles a traditional job. There is, however, one exception: I now have more meetings on my calendar as a retiree than I did as a full-time employee.

Today, I'll share the unlikely story of how my calendar started filling up again and the even unlikelier reality that I'm completely okay with it (happy, even).

Letting go of autonomy

I recently wrote I'm inspecting everything I thought I knew about software. In this new era of coding agents, what have I held firm that's no longer relevant? Here's one area where I've completely changed my mind.

I've long been an advocate for promoting individual autonomy on software teams. At Test Double, we founded the company on the belief that greatness depended on trusting the people closest to the work to decide how best to do the work. We'd seen what happens when the managerial class has the hubris to assume they know better than someone who has all the facts on the ground.

This led to me very often showing up at clients and pushing back on practices like:

- Top-down mandates governing process, documentation, and metrics

- Onerous git hooks that prevented people from committing code until they'd jumped through a preordained set of hoops (e.g. blocking commits if code coverage dropped, if the build slowed down, etc.)

- Mandatory code review and approval as a substitute for genuine collaboration and collective ownership

More broadly, if technical leaders created rules without consideration for reasonable exceptions and without regard for whether it demoralized their best staff… they were going to hear from me about it.

I lost track of how many times I've said something like, "if you design your organization to minimize the damage caused by your least competent people, don't be surprised if you minimize the output of your most competent people."

Well, never mind all that

Lately, I find myself mandating a lot of quality metrics, encoding them into git hooks, and insisting on reviewing and approving every line of code in my system.

What changed? AI coding agents are the ones writing the code now, and the long-term viability of a codebase absolutely depends on establishing and enforcing the right guardrails within which those agents should operate.

As a result, my latest project is full of:

- Authoritarian documentation dictating what I want from each coder with granular precision (in CLAUDE.md)

- Patronizing step-by-step instructions telling coders how to accomplish basic tasks, repeated each and every time I ask them to carry out the task (as custom slash commands)

- Ruthlessly rigid scripts that can block the coder's progress and commits (whether as git hooks or Claude hooks)
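On the git side, a pre-commit hook is just an executable at `.git/hooks/pre-commit`. If it exits non-zero, git aborts the commit. Below is a minimal sketch of such a gate, assuming each of the project's checks can be expressed as a command line. The `CHECKS` list and the `run_checks` helper are hypothetical placeholders, not the hooks from any real project.

```python
#!/usr/bin/env python3
"""Hypothetical pre-commit gate (a sketch, not a real project's hook)."""
import subprocess
import sys

# Placeholder checks: swap in your project's real test, lint, or coverage commands.
CHECKS = [
    ["swift", "test"],
]


def run_checks(checks: list[list[str]]) -> int:
    """Run each check in order; return the first non-zero exit code, or 0 if all pass."""
    for cmd in checks:
        try:
            returncode = subprocess.run(cmd).returncode
        except FileNotFoundError:
            returncode = 127  # conventional shell exit code for "command not found"
        if returncode != 0:
            print(f"pre-commit: {' '.join(cmd)} failed; commit blocked", file=sys.stderr)
            return returncode
    return 0
```

To install it, append `sys.exit(run_checks(CHECKS))` as the final line, save the file as `.git/hooks/pre-commit`, and mark it executable. Stopping at the first failure keeps the feedback short, which matters when the thing reading the error is an agent.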

Everything I believe about autonomy still holds for human people, mind you. Undermining people's agency is indeed counterproductive if your goal is to encourage a sense of ownership, leverage self-reliance to foster critical thinking, and grow through failure. But coding agents are (currently) inherently ephemeral, trained generically, and impervious to learning from their mistakes. They need all these guardrails.

All I would ask is this: if you, like me, are constructing a bureaucratic hellscape around your workspace so as to wrangle Claude Code or some other agent, don't forget that your human colleagues require autonomy and self-determination to thrive and succeed. Lay down whatever gauntlet you need to for your agent, but give the humans a hall pass.

"There Will Come Soft Rains" a year from today

Easily my all-time favorite short story is "There Will Come Soft Rains" by Ray Bradbury. (If you haven't read it, just Google it and you'll find a PDF—seemingly half the schools on earth assign it.)

The story takes place exactly a year from now, on August 4th, 2026. In just a few pages, Bradbury recounts the events of the final day of a fully-automated home that somehow survives an apocalyptic nuclear blast, only to continue operating without any surviving inhabitants. Apart from being a cautionary tale, it's genuinely remarkable that—despite being written 75 years ago—it so closely captures many of the aspects of the modern smarthome. When sci-fi authors nail a prediction at any point in the future, people tend to give them a lot of credit, but this guy called his shot by naming the drop-dead date (literally).

I mean, look at this house.

It's got Roombas:

Out of warrens in the wall, tiny robot mice darted. The rooms were acrawl with the small cleaning animals, all rubber and metal. They thudded against chairs, whirling their moustached runners, kneading the rug nap, sucking gently at hidden dust. Then, like mysterious invaders, they popped into their burrows. Their pink electric eyes faded. The house was clean.

It's got smart sprinklers:

The garden sprinklers whirled up in golden founts, filling the soft morning air with scatterings of brightness. The water pelted window panes…

It's got a smart oven:

In the kitchen the breakfast stove gave a hissing sigh and ejected from its warm interior eight pieces of perfectly browned toast, eight eggs sunny side up, sixteen slices of bacon, two coffees, and two cool glasses of milk.

It's got a video doorbell and smart lock:

Until this day, how well the house had kept its peace. How carefully it had inquired, "Who goes there? What's the password?" and, getting no answer from lonely foxes and whining cats, it had shut up its windows and drawn shades in an old-maidenly preoccupation with self-protection which bordered on a mechanical paranoia.

It's got a Chamberlain MyQ subscription, apparently:

Outside, the garage chimed and lifted its door to reveal the waiting car. After a long wait the door swung down again.

It's got bedtime story projectors, for the kids:

The nursery walls glowed.

Animals took shape: yellow giraffes, blue lions, pink antelopes, lilac panthers cavorting in crystal substance. The walls were glass. They looked out upon color and fantasy. Hidden films clocked through well-oiled sprockets, and the walls lived.

It's got one of those auto-filling bathtubs from Japan:

Five o'clock. The bath filled with clear hot water.

Best of all, it's got a robot that knows how to mix a martini:

Bridge tables sprouted from patio walls. Playing cards fluttered onto pads in a shower of pips. Martinis manifested on an oaken bench with egg-salad sandwiches. Music played.

All that's missing is the nuclear apocalypse! But like I said, we've got a whole year left.

Video of this episode is up on YouTube:

I've made it! I'm over the hump! I'm actually writing* my language-learning app in Swift!

Send an email expressing how proud you are of me to podcast@searls.co. Or send along any news worth following that isn't about AI. Too much AI stuff lately.

*And by "I'm writing", I admit Claude Code is doing a lot of the heavy lifting here.

Hyperlinks:

Scott Werner, who is frustratingly good at writing what I'm thinking about LLMs, has a new post out where he compares being an "agentic" coder to being an octopus, with each arm being a separate instance of Claude Code independently thinking and acting on its own. It's a good post and you should read it.

Midway through, he says exactly what first came to mind when I saw the image of the octopus in this context:

Here's the thing about teams now:

Two developers on one codebase is like two octopuses sharing one coral reef. Technically possible. Practically ridiculous. Everybody's arms getting tangled. Ink everywhere. The coral is screaming (coral doesn't scream, but work with me here).

But one octopus with eight projects? That's just nature.

The more time I spend with coding agents, the more I become convinced that they are damn-near incompatible with working in teams. I've suggested this before, but I really think more people should be chewing on this. The bottleneck for software teams—the thing that's always made them less than the sum of their parts—is the handshake problem. It's the one thing from The Mythical Man-Month everyone remembers: "Adding manpower to a late software project makes it later."

For the last 50 years, this has been (quite reasonably) understood in terms of the number of humans on a team: the number of relationships between those humans in an organization's design could be used to compute an approximate productivity tax on the collective's broader efforts to encode some kind of intention into software.

If we have 8 humans working on a software project, we have (8 ⨉ 7) ÷ 2 or 28 relationships to manage, with each individual capable of and burdened by the need to bidirectionally seek shared understanding and consensus with one another for the purpose of coordinating their efforts.

Now imagine the team having 8 humans each juggling 7 sub-agents on a project. This figure balloons to (64 ⨉ 63) ÷ 2 or 2,016 relationships. In the good ol' days of 2022, this quadratic increase in communication cost for squaring the size of the team was enough to give people pause on its own. But when ⅞ of the team are AI agents, it adds an all-new wrinkle to the math: 1,988 of those connections (every one with an agent on either end) are unidirectional. The agents can receive information and instruction, but they lack durable institutional memory, they can't pipe up in meetings, and each has its view of the world bottlenecked behind a single operator. Each of those complications has the opportunity to dramatically compound the already-really-quite-bad errors we typically associate with large software teams. This is made even worse by the fact that a manager has no observable signal that their team's composition has changed so radically: they'll walk into the room and see the same eight nerds staring at their computers as ever before.
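The counts above come from the handshake formula n(n - 1)/2. This small sketch breaks a mixed team down by relationship type. It assumes a 64-member team in which ⅞ of the members are agents, which works out to 8 humans with 7 agents apiece. The function names are illustrative.

```python
def pairs(n: int) -> int:
    """Distinct pairwise relationships among n participants: n(n - 1)/2."""
    return n * (n - 1) // 2


def team_relationships(humans: int, agents_per_human: int) -> dict[str, int]:
    """Break down the pairwise relationships on a team of humans plus their agents."""
    agents = humans * agents_per_human
    return {
        "total": pairs(humans + agents),
        "human_human": pairs(humans),
        "human_agent": humans * agents,
        "agent_agent": pairs(agents),
        # Every relationship with an agent on at least one end.
        "involving_agent": pairs(humans + agents) - pairs(humans),
    }
```

`team_relationships(8, 7)` yields 2,016 total relationships. Of those, 28 are human-to-human, 448 are human-to-agent, and 1,540 are agent-to-agent, so 1,988 have an agent on at least one end.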

My theory is that this issue hasn't already triggered productivity meltdowns thanks to a happy accident of circumstance: the people currently trailblazing multi-agent workflows in earnest are highly engaged, driven programmers, the go-getting early adopters who were using Rails in 2005 and Node.js in 2009. Such developers are rare enough that the median team of eight engineers may not include even one, which means we haven't yet seen what deploying coding agents at scale will really look like. My prediction is that as these tools continue to go mainstream, things are going to go about as well as if you were to throw a dozen octopuses into the same aquarium.

If none of this is quite clicking with you, think of it this way. Team A has 8 programmers in a room working on a project. Team B has 8 technical analysts each managing a separate sub-team of 8 offshore developers somewhere in South Asia, replete with all the time zone and communication constraints those impose. We have a lot of data to indicate that Team B is in for a bad fucking time, but that scenario is effectively what mainstream adoption of coding agents as they exist today would represent.

Anyway, if your entire team is working to keep their coding agents' hoppers full (replete with subagents and juggling multiple tasks at a time), what is your effective team size by this measure? Am I wrong here? Is everything actually going great? Is coordination not suddenly much harder than it was before? Let me know.

Shout-out to Orta for pulling on the "full-breadth developer" thread with such a concrete, detailed accounting of his agentic coding experiences blog.puzzmo.com/posts/2025/07/30/six-weeks-of-claude-code/

Consider this one of a thousand signposts I'll erect for the sake of anyone on the journey to becoming a full-breadth developer. What's discussed below is exactly the sort of thing that will separate the people who successfully wrangle coding agents from the people doomed to be replaced by them.

This post by Jared Norman about the order in which we design computer programs got stuck in my craw as I was reading it:

When you build anything with code, you start somewhere. Knowing where you should start can be hard. This problem contributes to the difficulty of teaching programming. Many programmers can't even tell you how they decide where they start. It turns out that thinking of somewhere you could start and starting there is good enough for a lot of people.

Relatable.

Back when people talked about test-driven development, I spilled a lot of ink discussing "bottom-up" (Detroit-school) versus "outside-in" (London-school) design and the benefits and drawbacks of each. Both because outside-in TDD was more obscure and because it's a better fit for most application development, I exerted far too much effort building mocking libraries and teaching people how to use them well.
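For anyone who missed those debates, here is a minimal sketch of the outside-in (London-school) style using Python's `unittest.mock`. The test for a top-level `checkout` function swaps its collaborators for `Mock` objects, so the test itself defines the interfaces those collaborators need. `checkout`, `pricer`, and `printer` are hypothetical names.

```python
from unittest.mock import Mock


def checkout(cart, pricer, printer):
    """Top-level unit under test: price each item, then print a receipt."""
    total = sum(pricer.price(item) for item in cart)
    printer.print_receipt(cart, total)
    return total


def test_checkout_prices_items_and_prints_receipt():
    # Outside-in: collaborators are test doubles, and the test dictates their interfaces.
    pricer = Mock()
    pricer.price.side_effect = lambda item: {"apple": 2, "pear": 3}[item]
    printer = Mock()

    total = checkout(["apple", "pear"], pricer, printer)

    assert total == 5
    assert pricer.price.call_count == 2
    printer.print_receipt.assert_called_once_with(["apple", "pear"], 5)
```

A bottom-up (Detroit-school) version would start by building and testing a real pricer and printer, then compose them in `checkout` using real instances instead of mocks.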

Whether that work had any lasting impact, who's to say. It kept me busy.

In the broader scope of software development, the discussion of where the fuck to even start when programming a computer can take many forms. Most often, the debate comes down to type systems and the degree to which somebody is obsessed with or repelled by them.

At the end of the day, every program is just a recipe. Some number of ingredients being mixed together over some number of steps. The particular order in which you write the recipe doesn't really matter. Instead, what matters is that you think deeply and carefully consider your approach. The ideal order is whatever will prompt the right thought at the right moment to do the right thing to produce the right solution. The best approach is ever-changing. It will vary from person to person and situation to situation and will change from day to day.

But it's easier to tell people to follow a prescriptive one-size-fits-most solution, like to adopt London-school TDD or to use your type system.

Jared wraps with:

You can build any kind of structure with any kind of technique. Hell, you can write pretty good object-oriented code in C. You'll find no hard laws of computer science here. Your entrypoint into the problem you're solving doesn't decide how your system will be structured.

The order you tackle components merely encourages certain kinds of designs.

In programming, it's seen as a cop-out to butt into a spirited debate between two sides just to say that the real answer is "it depends." But one reason the question of "where do we start?" has been so fundamental to my programming career (especially as someone who can suffer crippling Blank Page Syndrome) is that there actually is a right answer to the question. The hard part? The only way to arrive at that answer is to think it through yourself. No shortcuts. No getting around it. And if you want to get better over time? That requires deliberate and continuous metacognition (thinking about one's own thinking) to an extent that vanishingly few programmers will ever realize is necessary, much less attempt.

I made Xcode's tests 60 times faster

Time is our most precious resource, as both humans and programmers.

An 8-hour workday contains 480 minutes. Out of the box, running a new iOS app's test suite from the terminal using xcodebuild test takes over 25 seconds on my M4 MacBook Pro. After extracting my application code into a Swift package—such that the application project itself contains virtually no code at all—running swift test against the same test suite now takes as little as 0.4 seconds. That's over 60 times faster.

Given 480 minutes, that's the difference between having a theoretical upper bound of 1,152 potential actions per day and having 72,000.
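Those per-day figures come from dividing the workday by the duration of one test run. This quick check uses integer milliseconds to avoid floating-point rounding. It treats the measured durations as exactly 25 seconds and 0.4 seconds, so the results are upper bounds.

```python
WORKDAY_MS = 480 * 60 * 1000  # an 8-hour workday (480 minutes) in milliseconds


def max_runs_per_day(run_ms: int) -> int:
    """Upper bound on back-to-back test runs in one workday, given one run's duration in ms."""
    return WORKDAY_MS // run_ms


xcodebuild_ms = 25_000  # `xcodebuild test`: roughly 25 s
swift_test_ms = 400     # `swift test`: as little as 0.4 s

speedup = xcodebuild_ms / swift_test_ms  # 62.5, i.e. "over 60 times faster"
```

`max_runs_per_day(xcodebuild_ms)` gives 1,152 and `max_runs_per_day(swift_test_ms)` gives 72,000.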

If that number doesn't immediately mean anything to you, you're not alone. I've been harping on the importance of tightening this particular feedback loop my entire career. If you want to see the same point made with more charts and zeal, here's me saying the same shit a decade ago:

And yes, it's true that if you run tests through the Xcode GUI it's faster, but (1) that's no way to live, (2) it's still pretty damn slow, and (3) in a world where Claude Code exists and I want to constrain its shenanigans by running my tests in a hook, a 25-second turnaround time from the CLI is unacceptably slow.

Anyway, here's how I did it, so you can too.

AppleCare One is a great deal if you like Apple's more expensive products. An iPad Pro ($10.99/mo), Vision Pro ($24.99/mo), and Pro Display XDR ($17.99/mo) somehow add up to $19.99/mo.

That's $33.98/mo cheaper than à la carte pricing. apple.com/applecare/
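Here is the sum behind that savings figure. It uses `Decimal` to sidestep float rounding on currency, and the prices are the monthly figures quoted above.

```python
from decimal import Decimal

# Per-device monthly prices quoted in the post.
a_la_carte = {
    "iPad Pro": Decimal("10.99"),
    "Vision Pro": Decimal("24.99"),
    "Pro Display XDR": Decimal("17.99"),
}
applecare_one = Decimal("19.99")  # AppleCare One monthly price quoted in the post

separate_total = sum(a_la_carte.values())  # Decimal("53.97")
savings = separate_total - applecare_one   # Decimal("33.98")
```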

Did Satya write this for current and former employees or did Satya write this for Satya? blogs.microsoft.com/blog/2025/07/24/recommitting-to-our-why-what-and-how/

Finally, vindication. I've been calling bullshit on resting meat since I first heard of it. Get the meat to the right temp and shove it in your face while it's still hot. You can rest when you're dead. seriouseats.com/meat-resting-science-11776272