I'd do it all again

This is a copy of the Searls of Wisdom newsletter delivered to subscribers on November 25, 2025.

Hello! We're all busy, so I'm going to try my hand at writing less this time. Glance over at your scrollbar now to see how I did. Since we last corresponded:

- Dropped in on the Ruby AI podcast

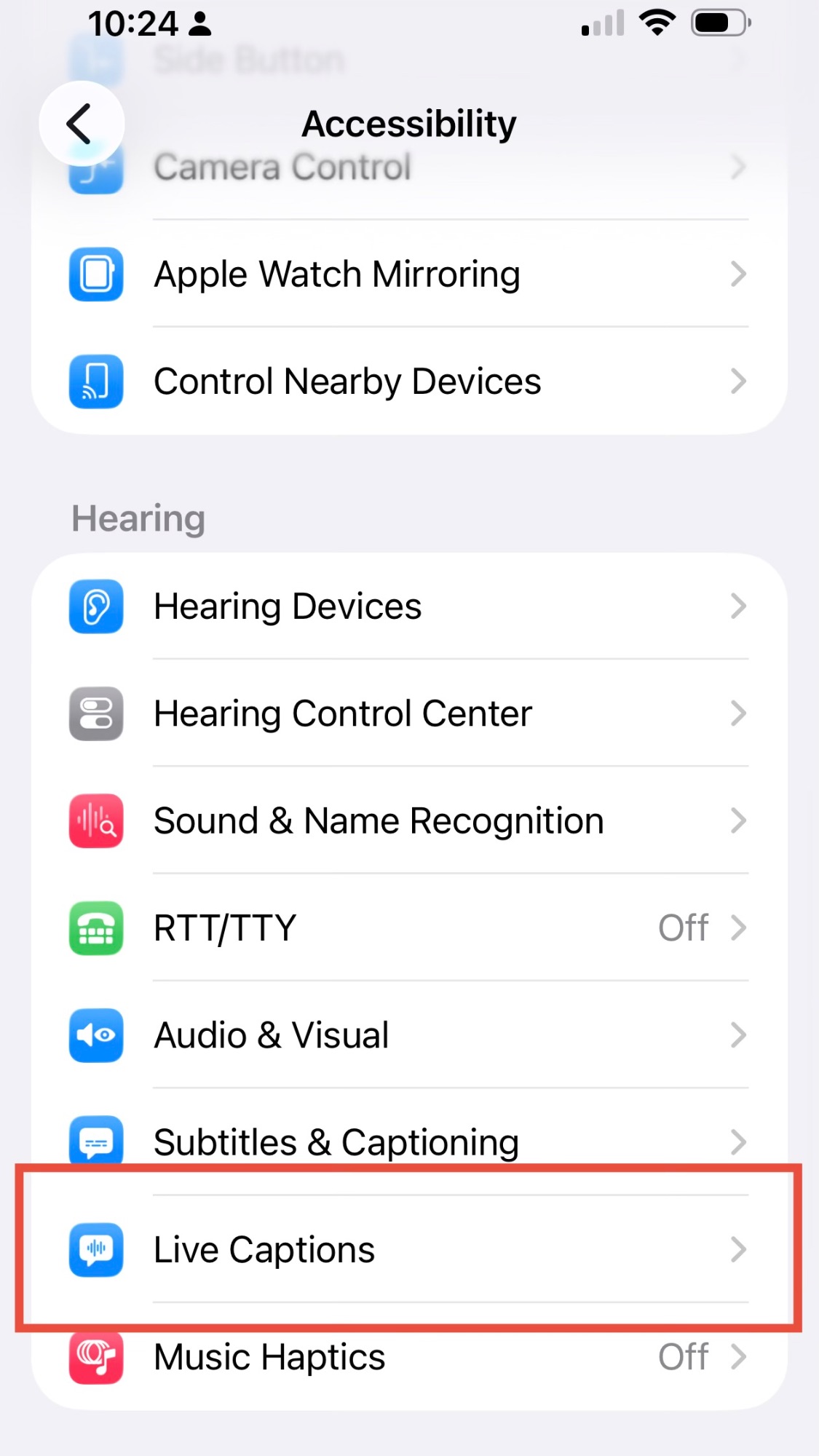

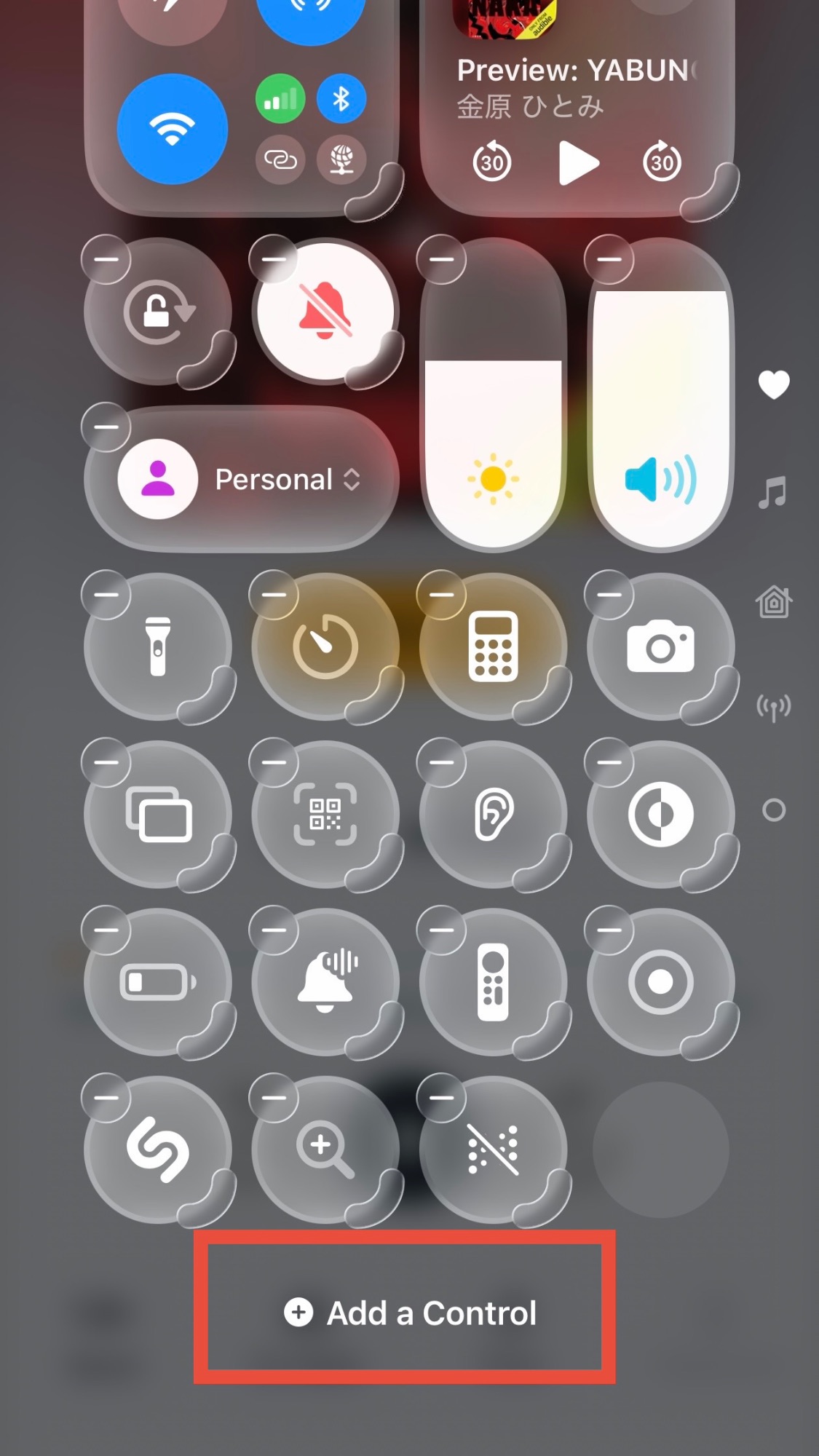

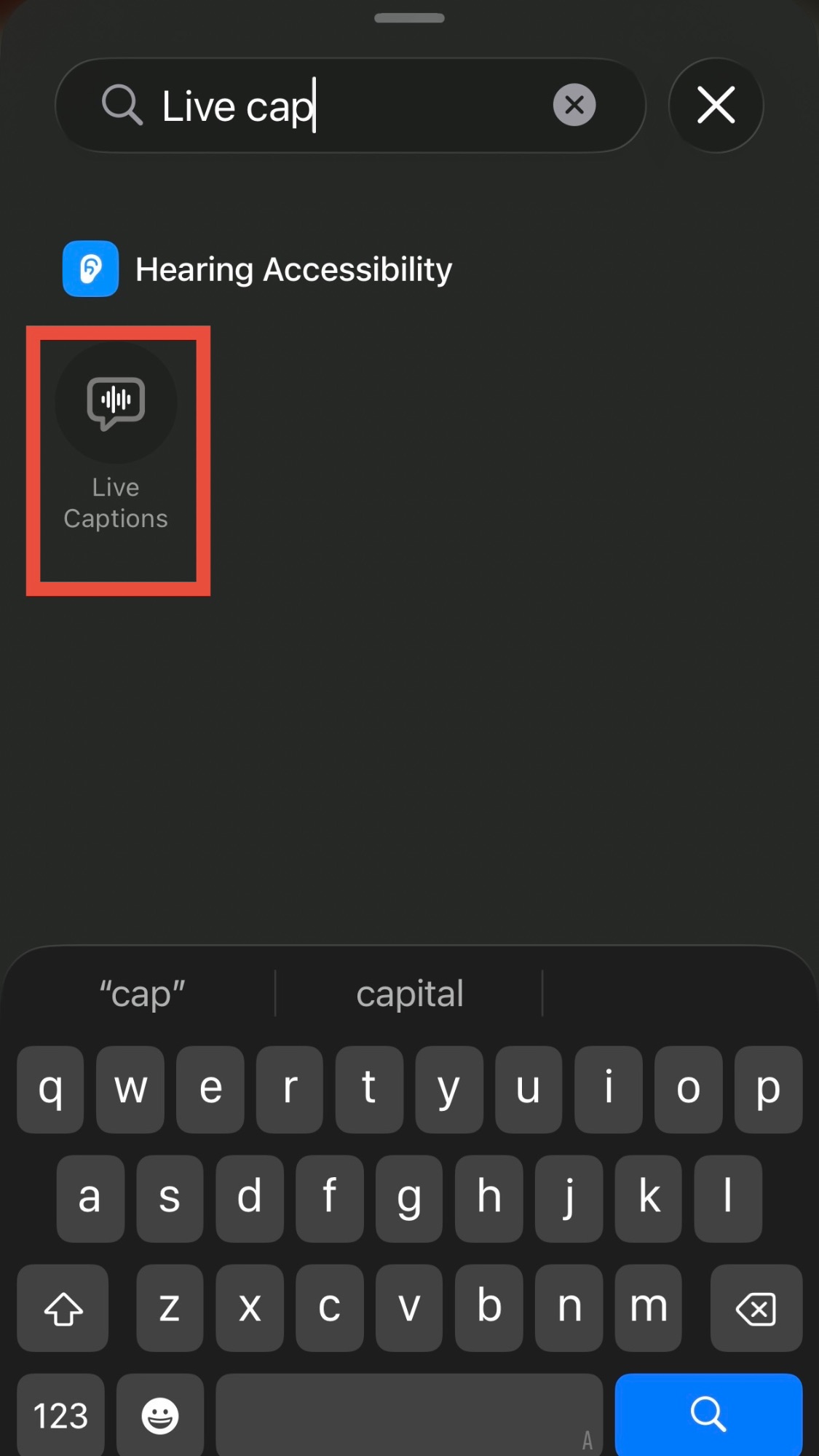

- Added a new cable to the increasing number of cables plugging my face into my computer, which shipped with some pretty glaring issues, some of which I talked about

- Found somebody else saying that, in the short term, AI codegen is going to dramatically increase the demand for software as the supply constraint on programming eases

- Made an open source library called Straight-to-Video that performs client-side remuxing and transcoding of videos, beating them into shape for upload via the Instagram, Facebook, and TikTok APIs

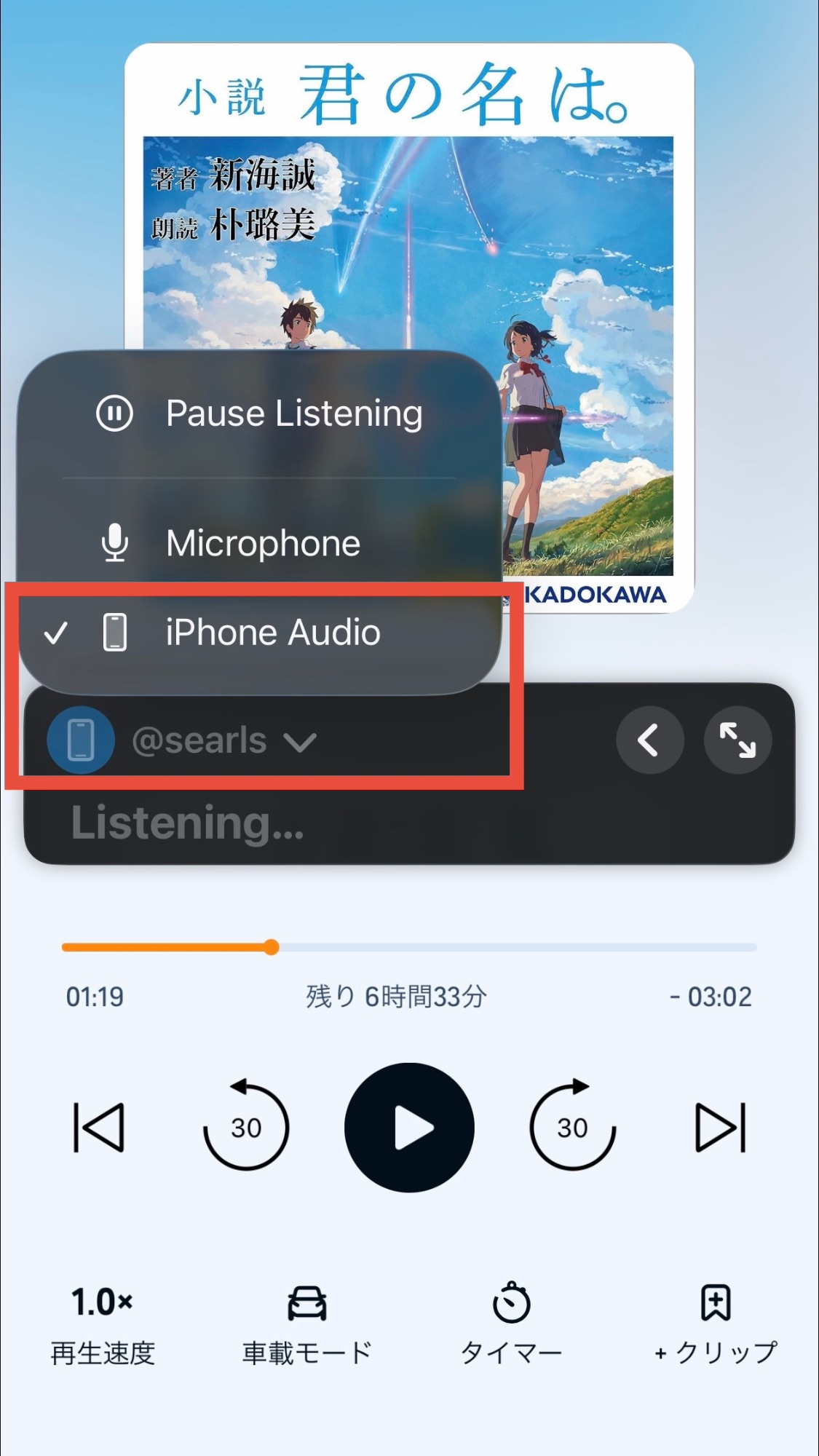

- Hosted my brother after he sold his house, which (of course) coincided with nonstop power and Internet outages. Rather than do something about it, I complained into my microphone

- Mourned the fact that the iPhone 18 Air apparently got cancelled or delayed to Spring 2027, continuing my losing streak of falling in love with Apple's least popular hardware

- Learned I have huge fucking turbinates, even relative to my already huge fucking head

My good friend Ken took me to the Magic game ~~last night~~ some number of nights ago. It was a great game because we were losing very badly, and then it became very close, and then, right at the end—we won! The classic comeback narrative arc was fulfilled. Sports!

I was reflecting on life the other day, which is a thing I do more often now that I'm firmly in Phase 3 of my evil plan to ride off into the sunset and gradually be forgotten by all of you.

My original plan for this essay would have pulled at the common thread that ties things like game design, derivatives trading, reality shows, and sports betting together. Unfortunately and unsurprisingly, it was taking me too long, and I'm now running out of time in November to give you a recap on what happened in October.

(By the way, don't be surprised if I just send you all a postcard for the December issue. I'm still new at running a monthly newsletter, and I'd prefer not to find out what happens when I fall more than a month behind. Feel free to demand a refund by replying to this message.)

So, anyway, like I said, my actual essay fell apart. Instead, I'm going to share a personal example of how a series of consequential decisions can paradoxically be both productive & rational, while simultaneously being costly & misguided.

I'd do it all again

It all started with one stray piece of unsolicited feedback.